#0002 When I lost my intuition, From disruption to transformation, "Drowning in Gunk, Starving for Spark", Cryptography scales trust, How To Make Superbabies

Welcome to Constant Flux, a weekly lens on the polycrisis.

When I lost my intuition

This article got me thinking: how does intuition evolve in an AI-driven world? Intuition is the brain’s fast, unconscious processing of past experiences, which helps us make snap judgments. AI can certainly sharpen intuition by offering insights. But just as calculators changed how we think about arithmetic, overreliance might dull it and shift decision-making away from human instinct.

From disruption to transformation

Another framework. Roger Spitz introduces the AAA Framework: Antifragile, Anticipatory, and Agility to help decision-makers turn disruption into transformation. By building systems that strengthen through shocks, anticipating change, and staying adaptable, organizations can turn uncertainty into opportunity.

“Drowning in Gunk, Starving for Spark”

The internet is filling up with junk, making it harder to find anything real. AI is learning from itself, and human content is getting more repetitive. Are we heading toward a creativity crisis, or is there still enough randomness to keep things interesting?

Jay says the internet is overloaded with human "gunk": content designed to be seen rather than to inform or entertain. Clickbait, SEO padding, and engagement bait have piled up, making meaningful information harder to find. AI-generated content is endless and fluid. Gunk is different because it sticks around and clutters the system. https://share.snipd.com/snip/1cdcd9d7-edf2-49e7-b809-a6177fbfc01a

But Chris Lu from Google DeepMind says there is still entropy, the randomness that drives creativity, because people still produce unexpected and insightful content. However, AI is increasingly learning from its own outputs, and human content is becoming more repetitive. Together these trends risk an entropy collapse, in which creativity declines and AI models keep reinforcing the same patterns. https://share.snipd.com/snip/cd1764d7-743f-43fb-be72-0535d072f6b3

U.S. blocks its scientists from pivotal global climate report

A major hit to global collaboration when we need it most. It weakens trust in international climate science and makes climate change, biodiversity loss, and inequality even harder to tackle. Instead of the urgent, collective action this moment demands, it adds to the polycrisis and makes real solutions less likely.

Cryptography scales trust

I keep coming back to this. If traditional institutions fail, cryptography could build trust in decentralized networks. Signal keeps communication secure even under censorship, Ethereum smart contracts could enable agreements without middlemen, and the Carbon Coin (à la The Ministry for the Future) imagines a currency that rewards carbon reduction. If trust becomes harder to maintain, cryptography might help keep societies resilient and adaptable in the face of overlapping crises.

How To Make Superbabies

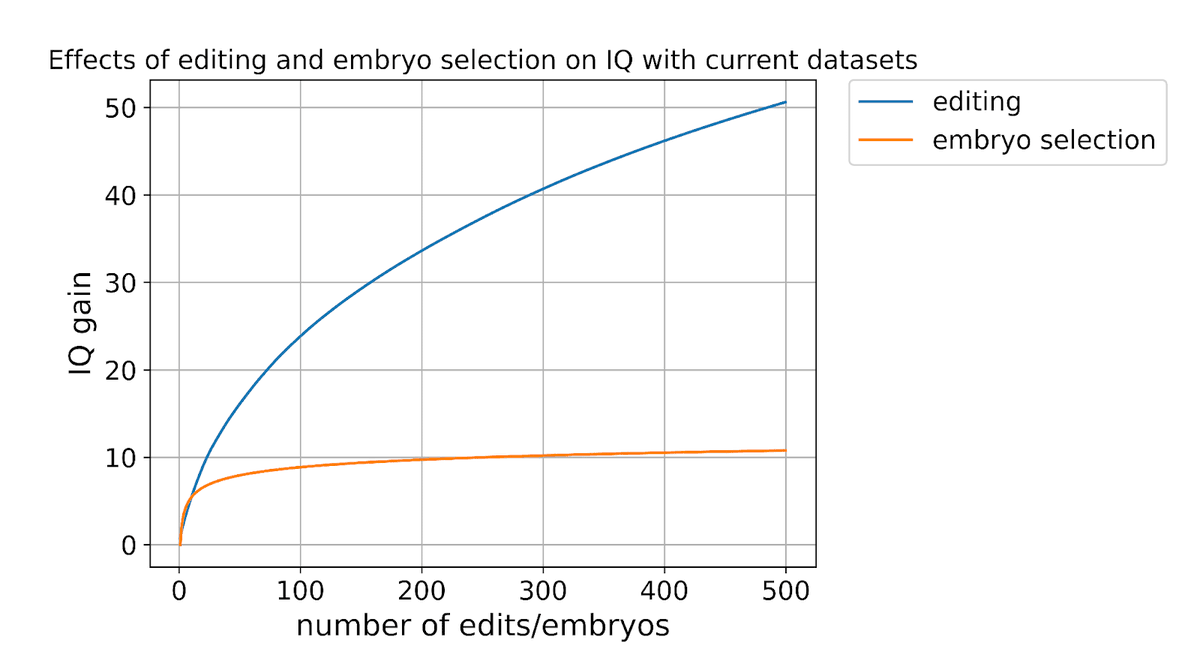

This impressive article explores a plausible near future in which genetic engineering boosts intelligence by 25–50 points, extends lifespans beyond 100 years, and eliminates diseases like Alzheimer’s and cancer. The tone is positively aggressive :-) and argues that if these advances are possible, they should be pursued:

> If we have the ability to significantly enhance intelligence and lifespan, then it seems irresponsible not to pursue it.

Immense potential, of course, but as usual the risks are just as large. It makes me wonder, for example, what kind of society over-optimizing for intelligence might produce. It could come at the cost of creativity, emotional depth, or adaptability. It reminds me of Ted Chiang’s Understand, where two individuals enhance their intelligence and become detached from human concerns. So… if intelligence keeps amplifying, does it pull us apart? Would an elite emerge, operating on a level far removed from the rest of society?

It's a long read at 40 minutes, but there's also a shorter summary right here.